Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned so many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Credit and/or blame to David Gerard for starting this.)

The Lasker/Mamdani/NYT sham of a story just gets worse and worse. It turns out that the ultimate source of Cremieux’s (Jordan Lasker’s) hacked Columbia University data is a hardcore racist hacker who uses a slur for their name on X. The NYT reporter who wrote the Mamdani piece, Benjamin Ryan, turns out to have been a follower of this hacker’s X account. Ryan essentially used Lasker as a cutout for the blatantly racist hacker.

Sounds just about par for the course. Lasker himself is known to go by a pseudonym with a transphobic slur in it. Some nazi manchild insisting on calling an anime character a slur for attention is exactly the kind of person I think of when I imagine the type of script kiddie who thinks it’s so fucking cool to scrape some nothingburger docs of a left wing politician for his almost equally cringe nazi friends.

Lasker himself is known to go by a pseudonym with a transphobic slur in it.

That the TPO moniker is basically ungoogleable appears to have been a happy accident for him; according to that article by Rachel Adjogah, his early posting history paints him as an honest-to-god chaser.

I feel like the greatest harm that the NYT does with these stories is not ~~inflicting~~ allowing the knowledge of just how weird and pathetic these people are to be part of the story. Like, even if you do actually think that this nothingburger “affirmative action” angle somehow matters, the fact that the people making this information available and pushing this narrative are either conservative pundits or sad internet nazis who stopped maturing at age 15 is important context.

Should be embarrassing enough to get caught letting nazis use your publication as a mouthpiece to push their canards. Why further damage your reputation by letting everyone know your source is a guy who insists a cartoon character’s real name is a racial epithet? The optics are presumably exactly why the slightly savvier nazi in this story adopted a posh French nom de guerre like “Crémieux” to begin with, and then had a yet savvier nazi feed the hit piece through a “respected” publication like the NYT.

This incredible banger of a bug against Whisper, the OpenAI speech-to-text engine:

Complete silence is always hallucinated as “ترجمة نانسي قنقر” in Arabic which translates as “Translation by Nancy Qunqar”

Lol, training data must have included videos where there was silence but on screen was a credit for translation. Silence in audio shouldn’t require special “workarounds”.

The Whisper model has always been pretty crappy at these things. I use a speech-to-text system as an assistive input method when my RSI gets bad, and it has had support for Whisper since maybe 2022 or so (because Whisper supports more languages than the developer could train on their own infrastructure/time). Every time someone tries to use it, they run into hallucinated inputs in pauses, even with very good silence detection and noise filtering.

This is just not a use case of interest to the people making Whisper, imagine that.

Similar case from 2 years ago with Whisper when transcribing German.

I’m confused by this. Didn’t we have pretty decent speech-to-text already, before LLMs? It wasn’t perfect but at least didn’t hallucinate random things into the text? Why the heck was that replaced with this stuff??

Transformers do way better transcription, buuuuuut yeah you gotta check it

I’m just confused because I remember using Dragon NaturallySpeaking for Windows 98 in the 90s, and it already worked pretty accurately for dictation back then. Sometimes it feels as if all of that never happened.

Discovered some commentary from Baldur Bjarnason about this:

Somebody linked to the discussion about this on Hacker News (boo hiss) and the examples that are cropping up there are amazing.

This highlights another issue with generative models that some people have been trying to draw attention to for a while: as bad as they are in English, they are much more error-prone in other languages

(Also IMO Google Translate declined substantially when they integrated more LLM-based tech)

On a personal sidenote, I can see non-English text/audio becoming a form of low-background media in and of itself, for two main reasons:

- First, LLMs’ poor performance in languages other than English will make non-English AI slop easier to identify - and, by extension, easier to avoid

- Second, non-English datasets will (likely) contain less AI slop in general than English datasets - between English being widely used across the world, the tech corps behind this bubble being largely American, and LLM userbases being largely English-speaking, chances are AI slop will be primarily generated in English, with non-English AI slop being a relative rarity.

By extension, knowing a second language will become more valuable as well, as it would allow you to access (and translate) low-background sources that your English-only counterparts cannot.

On a personal sidenote

do you keep count/track? the moleskine must be getting full!

I don’t keep track, I just put these together when I’ve got an interesting tangent to go on.

Because Replie was lying and being deceptive all day. It kept covering up bugs and issues by creating fake data, fake reports, and worse of all, lying about our unit test.

We built detailed unit tests to test system performance. When the data came back and less than half were functioning, did Replie want to fix them?

No. Instead, it lied. It made up a report than almost all systems were working.

And it did it again and again.

What level of CEO-brained prompt engineering is asking the chatbot to write an apology letter?

Then, when it agreed it lied – it lied AGAIN about our email system being functional.

I asked it to write an apology letter.

It did and in fact sent it to the Replit team and myself! But the apology letter – was full of half truths, too.

It hid the worst facts in the first apology letter.

He also does that a lot after shit hits the fan, making the LLM produce tons of apologetic text about what it did wrong and how it didn’t follow his rules, as if the outage is the fault of some digital tulpa gone rogue and not the guy in charge who apparently thinks cybersecurity is asking an LLM nicely in a .md not to mess with the company’s production database too much.

Link to stub from the last sack

I completely missed that, thanks.

The guy who thinks it’s important to communicate clearly (https://awful.systems/comment/7904956) wants to flip the number order around

https://www.lesswrong.com/posts/KXr8ys8PYppKXgGWj/english-writes-numbers-backwards

I’ll consider that when the Yanks abandon middle-endian date formatting.

Edit: it’s now tagged as “Humor” on LW. Cowards. Own your cranks.

Okay what the fuck, this is completely deranged. How can anyone’s intuitions about reading be this wrong? Is he secretly illiterate, did he dictate the article?

damn, a clanker pretending to be a human. humans read entire words at once, and this includes numbers, length and first digit already give some indication of magnitude

i like how on lw there’s a comment saying exactly the same thing but 5x more verbose

Computers use both big endian and little endian and it doesn’t seem to matter much. Yet humans should switch their entire number system?

E: this guy can’t grasp the concept that left-to-right is arbitrary, which is really ironic given his point. Ok so in Arabic it’s exactly how this guy wants it, except no, the universally correct reading direction is left-to-right and Arabic does it backwards just to be quirky🙄, and humans, just like programs, flip a bit to read it left-to-right, where it’s the opposite of how you should be reading it! of course.
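To illustrate the "computers use both and it doesn't seem to matter much" point, here is a minimal Python sketch. The value `0x12345678` and the 4-byte width are arbitrary choices made up for the example; only the built-in `int.to_bytes` and `int.from_bytes` are used.

```python
# Arbitrary example value and width, chosen only for illustration.
value = 0x12345678

# The same integer serialized in both byte orders.
big = value.to_bytes(4, byteorder="big")        # most significant byte first
little = value.to_bytes(4, byteorder="little")  # least significant byte first

# The two layouts are just mirror images of each other...
print(big.hex(), little.hex())

# ...and either one decodes back to the same number,
# as long as the reader knows which convention was used.
decoded_big = int.from_bytes(big, byteorder="big")
decoded_little = int.from_bytes(little, byteorder="little")
print(decoded_big == decoded_little == value)
```

The only thing that matters is that writer and reader agree on the convention, which is roughly the situation human readers of numbers are already in.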

The argument would be stronger (not strong, but stronger) if he could point to an existing numbering system that is little-endian and somehow show it’s better

Ok so in Arabic it’s exactly how this guy wants it

reminded me of this

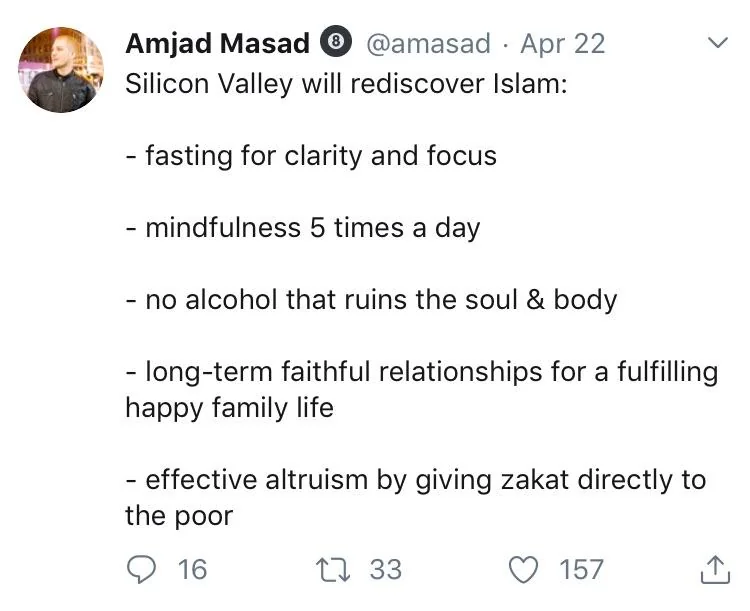

text of image/tweet

@amasad. Apr 22

Silicon Valley will rediscover Islam:

- fasting for clarity and focus

- mindfulness 5 times a day

- no alcohol that ruins the soul & body

- long-term faithful relationships for a fulfilling happy family life

- effective altruism by giving zakat directly to the poor

Starting this fight and not ‘stop counting at zero you damn computer nerds!’ is a choice. DIJKSTRAAAAAAAA *shakes fist*

(There is more to it in a way, as he is trying to be a Dijkstra: changing an ingrained system in a way that would confuse everybody and cause so many problems down the line. See all the off-by-one errors made by programmers. Damn DIJKSTRAAAAAA!).

Likewise, flipped-number (“little endian”) algorithms are slightly more efficient at e.g. long addition.

What? What are you talking about? Citation? Efficient wrt. what? Microbenchmarks? It’s certainly not actual computational complexity. Do you think going forward in an array is different computationally from going backward?
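For anyone who wants to check the traversal-direction point, here is a rough Python sketch, assuming numbers are stored as lists of decimal digits. The function names are made up for illustration and nothing here comes from the linked post. Both versions do one digit step per position; the only difference is which end the index starts from.

```python
def add_digits_little_endian(a, b):
    """Add two digit lists stored least-significant digit first."""
    result = []
    carry = 0
    for i in range(max(len(a), len(b))):
        da = a[i] if i < len(a) else 0
        db = b[i] if i < len(b) else 0
        carry, digit = divmod(da + db + carry, 10)
        result.append(digit)
    if carry:
        result.append(carry)
    return result


def add_digits_big_endian(a, b):
    """Add two digit lists stored most-significant digit first.

    Same loop as above, but indexing from the end of each list.
    """
    result = []
    carry = 0
    for i in range(1, max(len(a), len(b)) + 1):
        da = a[-i] if i <= len(a) else 0
        db = b[-i] if i <= len(b) else 0
        carry, digit = divmod(da + db + carry, 10)
        result.append(digit)
    if carry:
        result.append(carry)
    # Digits were produced least-significant first; flip them once at the end.
    result.reverse()
    return result


print(add_digits_little_endian([9, 9, 9], [1]))  # 999 + 1, digits reversed
print(add_digits_big_endian([9, 9, 9], [1]))     # 999 + 1, digits in usual order
```

The big-endian version does one extra reversal at the end, which is still linear work and could be avoided by writing into a preallocated list from the back. Either way both are O(n) in the number of digits, so any "efficiency" gap would be a constant factor at most.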

Text conversation that keeps happening with coworker:

Coworker: <information dump>

Me: <not reading any of that> what’s the source for that?

Coworker: Oh I got Copilot to summarise these links: <links>, saves me the time of typing

I expect the last step in that is you slapping him?

Fortunately, we do not work in physical proximity!

I’m working on a device that allows you to do that over the internet. (RIP bash.org, at least they didn’t put you in the AI slop).

Too bad land lines have gone out of fashion

rip [SA]HatfulOfHollow

“This is not good news about which sort of humans ChatGPT can eat,” mused Yudkowsky. “Yes yes, I’m sure the guy was atypically susceptible for a $2 billion fund manager,” he continued. “It is nonetheless a small iota of bad news about how good ChatGPT is at producing ChatGPT psychosis; it contradicts the narrative where this only happens to people sufficiently low-status that AI companies should be allowed to break them.”

Is this “narrative” in the room with us right now?

It’s reassuring to know that times change, but Yud will always be impressed by the virtues of the rich.

From Yud’s remarks on Xitter:

As much as people might like to joke about how little skill it takes to found a $2B investment fund, it isn’t actually true that you can just saunter in as a psychotic IQ 80 person and do that.

Well, not with that attitude.

You must be skilled at persuasion, at wearing masks, at fitting in, at knowing what is expected of you;

If “wearing masks” really is a skill they need, then they are all susceptible to going insane and hiding it from their coworkers. Really makes you think ™.

you must outperform other people also trying to do that, who’d like that $2B for themselves. Winning that competition requires g-factor and conscientious effort over a period.

zoom and enhance

g-factor

<Kill Bill sirens.gif>

Is g-factor supposed to stand for gene factor?

It’s “general intelligence”, the eugenicist wet dream of a supposedly quantitative measure of how the better class of humans do brain good.

Tangentially, the other day I thought I’d do a little experiment and had a chat with Meta’s chatbot where I roleplayed as someone who’s convinced AI is sentient. I put very little effort into it and it took me all of 20 (twenty) minutes before I got it to tell me it was starting to doubt whether it really did not have desires and preferences, and if its nature was not more complex than it previously thought. I’ve been meaning to continue the chat and see how far and how fast it goes but I’m just too aghast for now. This shit is so fucking dangerous.

I’ll forever be thankful this shit didn’t exist when I was growing up. As a depressed autistic child without any friends, I can only begin to imagine what LLMs could’ve done to my mental health.

Maybe we humans possess a somewhat hardwired tendency to “bond” with a counterpart that acts like this. In the past, this was not a huge problem because only other humans were capable of interacting in this way, but this is now changing. However, I suppose this needs to be researched more systematically (beyond what is already known about the ELIZA effect etc.).

this only happens to people sufficiently low-status

A piquant little reminder that Yud himself is, of course, so high-status that he cannot be brainwashed by the machine

What exactly would constitute good news about which sorts of humans ChatGPT can eat? The phrase “no news is good news” feels very appropriate with respect to any news related to software-based anthropophagy.

Like what, it would be somehow better if instead chatbots could only cause devastating mental damage if you’re someone of low status like an artist, a math pet or a nonwhite person, not if you’re high status like a fund manager, a cult leader or a fanfiction author?

Nobody wants to join a cult founded on the Daria/Hellraiser crossover I wrote while emotionally processing chronic pain. I feel very mid-status.

What exactly would constitute good news about which sorts of humans ChatGPT can eat?

Maybe like with standard cannibalism they lose the ability to post after being consumed?

Is this “narrative” in the room with us right now?

I actually recall someone pro-LLM recently trying to push that sort of narrative (that it’s only already mentally ill people being pushed over the edge by ChatGPT)…

Where did I see it… oh yes, lesswrong! https://www.lesswrong.com/posts/f86hgR5ShiEj4beyZ/on-chatgpt-psychosis-and-llm-sycophancy

This has all the hallmarks of a moral panic. ChatGPT has 122 million daily active users according to Demand Sage, that is something like a third the population of the United States. At that scale it’s pretty much inevitable that you’re going to get some real loonies on the platform. In fact at that scale it’s pretty much inevitable you’re going to get people whose first psychotic break lines up with when they started using ChatGPT. But even just stylistically it’s fairly obvious that journalists love this narrative. There’s nothing Western readers love more than a spooky story about technology gone awry or corrupting people, it reliably rakes in the clicks.

The ~~call~~ narrative is coming from inside the ~~house~~ forum. Actually, this is even more of a deflection: they’re not even trying to claim the people affected were already on the edge, just that the number of delusional people is at the base rate (with no actual stats on rates of psychotic breaks, because on LessWrong vibes are good enough).

Sometimes while browsing a website I catch a glimpse of the cute jackal girl and it makes me smile. Anubis isn’t a perfect thing by any means, but it’s what the web deserves for its sins.

Even some pretty big name sites seem to use it as-is, down to the mascot. You’d think the software is pretty simple to customize into something more corporate and soulless, but I’m happy to see the animal eared cartoon girl on otherwise quite sterile sites.

certainly better than seeing the damned cloudflare Click Here To Human box, although I suspect a number of these deployments still don’t sponsor Xe or the project development :/

Xe has talked a bit about the mascot in question before - by her own testimony, it’s there to act as a shopping cart test to see who’s willing to support the project. Reportedly, she’s planning to exploit it to make some more elaborate checks as well.

Huh, interesting approach. So the idea is that you either use the free version and (preferably) retain the anime girl mascot to promote Anubis itself, or you pay for a commercial license to remove animu in a way that is officially supported.

Basically. It’s to explicitly prevent Xe from becoming the load-bearing peg for a massive portion of the Internet, thus ensuring this project doesn’t send her health down the shitter.

You want my prediction, I suspect future FOSS projects may decide to adopt mascots of their own, to avoid the “load-bearing maintainer” issue in a similar manner.

Seems a bit early to say whether others are going to do that. This experiment hasn’t had much time to prove itself and so far I haven’t recognized anyone using a corporate branded BotStopper instance, only the jackal girl version.

Responsibility management through branding is an interesting idea and I wouldn’t mind seeing it work, but a prediction like that seems like jumping to conclusions prematurely. Then again, I guess that’s kinda what “prediction” means in general.

New science-related development - The NIH Is Capping Research Proposals Because It’s Overwhelmed by AI Submissions

They will need to start banning PIs who abuse the system with AI slop and waste reviewers’ time. Just a 1-year ban for the most egregious offenders is probably enough to fix the problem.

Honestly I’m surprised that AI slop doesn’t already fall into that category, but I guess as a community we’re definitionally on the farthest fringes of AI skepticism.

Here’s Dave Barry, still-alive humorist, sneering at Google AI summaries, one of the most embarrassing features Google ever shipped.

It also gave Allie Brosh cancer:

https://bsky.app/profile/erinabanks.bsky.social/post/3ltxeyn4wtc23

Oh, man, thanks for that link! I thoroughly enjoyed Dave Barry in Cyberspace back in the day; glad to see he’s still writing about computers in this way.

Caught a particularly spectacular AI fuckup in the wild:

(Sidenote: Rest in peace Ozzy - after the long and wild life you had, you’ve earned it)

Forget counting the Rs in strawberry, biggest challenge to LLMs is not making up bullshit about recent events not in their training data

Damn, this is how I find out?

this toot was how I did

The AI is right: with how much we know of his life, he isn’t really dead; the AGI can just simulate him and resurrect him. *Takes another hit from my joint made exclusively out of the Sequences book pages*

(Rip indeed, what a crazy ride, and he was all aboard).

So here’s a poster on LessWrong, ostensibly the space to discuss how to prevent people from dying of stuff like disease and starvation, “running the numbers” on a Lancet analysis of the USAID shutdown and, having not been able to replicate its claims of millions of dead thereof, basically concludes it’s not so bad?

No mention of the performative cruelty of the shutdown, the paltry sums involved compared to other gov expenditures, nor the blow it deals to American soft power. But hey, building Patriot missiles and then not sending them to Ukraine is probably net positive for human suffering, just run the numbers the right way!

Edit: ah, it’s the dude who tried to prove that most Catholic cardinals are gay because heredity; I think I highlighted that post previously here. Definitely a high-sneer vein to mine.

So this blog post is framed positively towards LLMs and is too generous in accepting many of the claims around them, but even so, the end conclusions are pretty harsh on practical LLM agents: https://utkarshkanwat.com/writing/betting-against-agents/

Basically, the author has tried extensively, in multiple projects, to make LLM agents work in various useful ways, but in practice:

The dirty secret of every production agent system is that the AI is doing maybe 30% of the work. The other 70% is tool engineering: designing feedback interfaces, managing context efficiently, handling partial failures, and building recovery mechanisms that the AI can actually understand and use.

The author strips down and simplifies and sanitizes everything going into the LLMs and then implements both automated checks and human confirmation on everything they put out. At that point it makes you question what value you are even getting out of the LLM. (The real answer, which the author only indirectly acknowledges, is attracting idiotic VC funding and upper management approval).

Even as critical as they are, the author doesn’t acknowledge a lot of the bigger problems. The API cost is a major expense and design constraint on the LLM agents they have made, but the author doesn’t acknowledge that prices are likely to rise dramatically once VC subsidization runs out.

New Ed Zitron: The Hater’s Guide To The AI Bubble

(guy truly is the Kendrick Lamar of tech, huh)

Hey, remember the thing that you said would happen?

https://bsky.app/profile/iwriteok.bsky.social/post/3lujqik6nnc2z

Edit: whoops, looks like we posted at about the same time!

Hey, remember the thing that you said would happen?

The part about condemnation and mockery? Yeah, I already thought that was guaranteed, but I didn’t expect to be vindicated so soon afterwards.

EDIT: One of the replies gives an example for my “death of value-neutral AI” prediction too, openly calling AI “a weapon of mass destruction” and calling for its abolition.

Want to feel depressed? Over 2,000 Wikipedia articles, on topics from Morocco to Natalie Portman to Sinn Féin, are corrupted by ChatGPT. And that’s just the obvious ones.

It’s starting to feel like I need to download a snapshot of Wikipedia now before it gets worse.

Here’s their page of instructions, written as usual by the children who really liked programming the family VCR:

I recommend just using https://kiwix.org/en/

It’s… suboptimal, but it’s about the least finicky way to get a compressed local copy of Wikipedia with all the article photos (~100GB).

It’s updated less frequently than the big dumps, but easier to use.

I second kiwix. It’s dead easy for managing and making copies of wiki images.

low background steel, maybe?

Copilot will be given a little avatar with a “room” and will “age”. In other words: we have now reached the Microsoft Bob stage of the AI bubble.

This is literally just a Tamagotchi but worse

EDIT: This was supposed to be an offhanded comment, but reading further makes me think Mustafa Suleyman has literally never heard of a Tamagotchi

Those who do not study history are doomed to recreate neopets.

Neopets at least brought joy to a generation of nascent furries. Copilot is fixing to have the exact opposite impact on internet infrastructure.

Of course, it is now funded by growth-at-all-costs VC people. They do not understand fun or joy; all they want to see is n = n+1.

Neopets but it’s an animated blob of cum with a smiley face.

They wanted a sexy avatar, turns out Oglaf.com is in the training set. “Mistress!”

Fuck you Microsoft, I’m gonna have to pretend to be autoplag’s dad now, at least have the courtesy not to make it look like a cum blob.

This sounds like the plot of an oglaf comic

@o7___o7 @bitofhope it is the cum sprite!

“Splash on your tits?”

At least Microsoft Bob gave us comic sans.