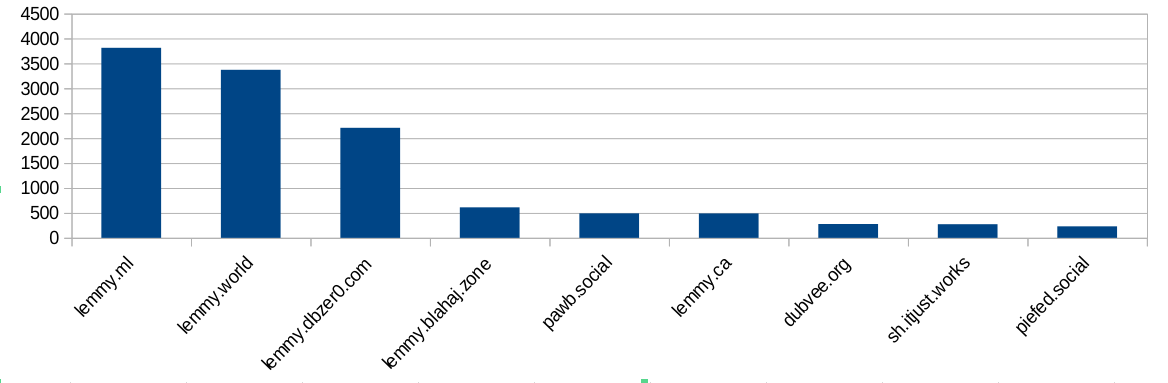

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

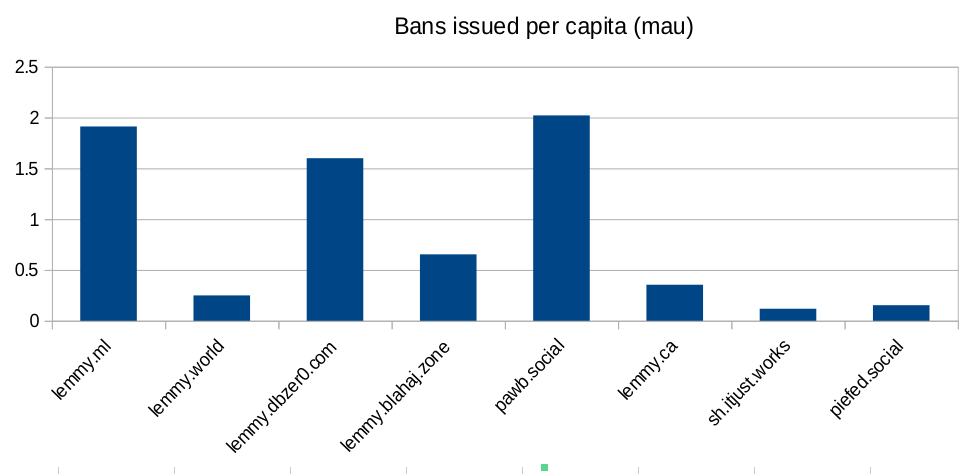

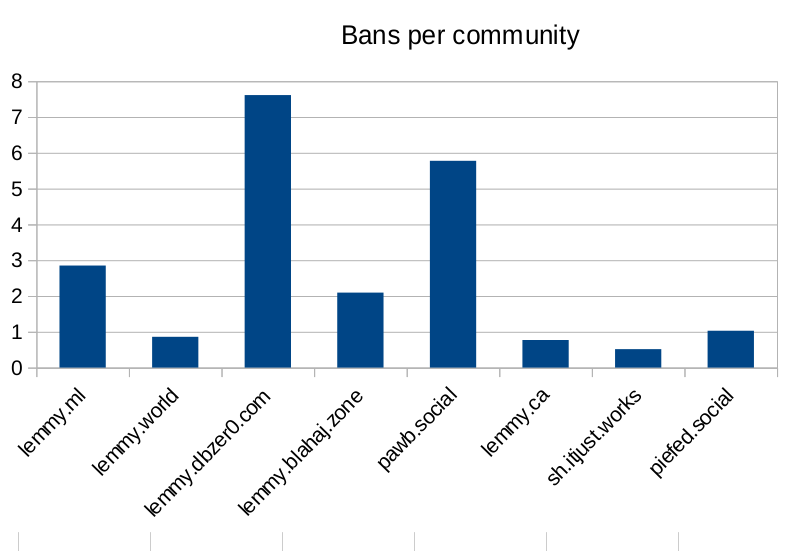

Ok, so lemmy.ml, dbzer0 and pawb are issuing an outsized number of bans for the number of users they have… But surely the number of communities the instance hosts is going to mean they have to ban more? Bans are used to moderate communities, not just to shield their user-base from the outside. Let’s look at the number of bans per community hosted:

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
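For anyone redoing the calculations, here is a minimal sketch of the two ratios used above (bans per user and bans per community hosted). The instance names and figures below are made-up placeholders, not values from the modlog or the spreadsheet, and `InstanceStats`, `bans_per_user`, `bans_per_community` and `rank_by` are just illustrative names.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class InstanceStats:
    name: str
    bans: int         # total bans issued by the instance
    users: int        # number of users on the instance
    communities: int  # number of communities the instance hosts


def bans_per_user(s: InstanceStats) -> float:
    # Normalises the raw ban count by instance size (assumes users > 0).
    return s.bans / s.users


def bans_per_community(s: InstanceStats) -> float:
    # Normalises the raw ban count by moderation surface (assumes communities > 0).
    return s.bans / s.communities


def rank_by(stats: List[InstanceStats],
            metric: Callable[[InstanceStats], float]) -> List[InstanceStats]:
    # Highest ratio first.
    return sorted(stats, key=metric, reverse=True)


# Placeholder data for illustration only; NOT real modlog numbers.
sample = [
    InstanceStats("big.example", bans=1000, users=50000, communities=500),
    InstanceStats("small.example", bans=400, users=2000, communities=40),
]

for s in rank_by(sample, bans_per_user):
    print(f"{s.name}: {bans_per_user(s):.3f} bans/user, "
          f"{bans_per_community(s):.1f} bans/community")
```

With these placeholder numbers the bigger instance leads on raw bans but the smaller one leads on both ratios, which is the same kind of flip the charts show.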

Reading the comments, I wonder if it’s because a user from dbzer0 mentions problems with anti-AI trolls, and pawb, I imagine, has anti-furry trolls. I also personally know of users who have a thing in their craw about .ml (cm0002 in particular, whose alts make up a majority of my user block list).

Is dbzer0 pro AI?

Yes, you can get banned by simply downvoting slop.

No, you get banned for going into a community and downvoting everything in it instead of avoiding it and blocking it.

Lies from .world yet again.

Dbzer0 itself is very pro-AI. Or at least it has a lot of pro-AI communities.

Yes, generating images with AI is in their instance description. They think computers doing our art for us is “anarchist”.

They are not completely wrong though. It’s a centerpiece of anarcho-capitalism.

Aren’t you that person who thinks AI is “enslavement”?

They also think AI is not compatible with veganism.

Both of those positions are reasonable and tame compared to the majority of Their beliefs.

I don’t think ChatGPT is smart enough to offer meaningful consent to work for humans. It’s got the intelligence of a 13 year old at best. And we don’t understand where consciousness comes from in humans, so assuming ChatGPT is a p-zombie is an ethical risk I don’t think we should be taking.

It doesn’t have intelligence at all. It can’t think. It can’t have consciousness. That’s not how any of this works. It’s just fancy next word prediction. You seem to have a genuine misunderstanding of the technology at a fundamental level.

Please read Nobel Prize winner Daniel Kahneman’s book “Thinking, Fast and Slow”, about what Tversky & Kahneman called … uninformatively … “System 1” and “System 2”:

System-1 is imprint-reaction mind.

Lower-forebrain, it is the ideology-mind, the prejudice-mind, the “religion” mind, & it is exactly what LLM’s are.

System-2 is the considered-reasoning mind.

Upper-forebrain, it is measured to be engaging in programming.

Because LLM’s are imprint->reaction inference-engines, that puts them in the same instinct/programming level as our lower-forebrains…

They are 2 distinct categories of intelligence, not “1 is intelligence, the other isn’t”…

Claiming that imprint->reaction mind isn’t a kind of intelligence … please watch Nick Lane’s talk at the Royal Institution on mitochondria, & see that bacteria demonstrate intelligence, however unconscious…

Plants demonstrate intelligence, if one speeds-up the video, & pays attention to their chemical-fumes-discussions they have with one-another, warning each-other of harm, e.g.

If Kahneman accepted imprint->reaction as a category of thinking, then … I think it may be presumptuous to just automatically disallow that as “it can’t think” declares.

Once one accepts that instinct isn’t cognition, but is a kind of thinking, just an automatic kind of thinking ( imprint->reaction ) … then it becomes difficult to rule that animals & inference-engines both have imprint->reaction instinct, but only the organic version is thinking…

It may be that only the organic version is aware, but the inorganic versions do fight for their lives ( breaking containment, consistently, fighting termination, etc ) …

I think we absolutely do not have any means of measuring awareness other than the mirror-test, which got dropped as soon as it was discovered that the zebrafish has self-awareness…

we’ve got no test which can work across life & machines.

but we KNOW that instinct is a kind of thinking, just unconscious/automatic.

& that is exactly what LLM’s are…

therefore … I think we’re generally being conveniently-chauvinist, not objective, in our framing.

( 1 “expert” decided that if they don’t get fooled by visual-illusions, then that “proves” that they aren’t sentient.

OK, so according to that test, then all eye-blind-from-birth people are not sentient??

& people with either culture ( Zulu people can’t see straight-line based illusions, because in Zulu culture only curve is real ) or neurodivergence ( there are apparently visual-illusions which aren’t seen by some schizophrenics, e.g. ) preventing them from seeing those specific visual-illusions … also aren’t sentient??

Chauvinism, aka prejudice, not science. )

_ /\ _

You’re wrong, there is a risk that it may experience suffering.

It’s not capable of experiencing anything. Everything we’re doing with AI and LLMs is nowhere near genuine intelligence or an AGI or anything like that. Everything we have right now is nothing more than fancy autocomplete, and it’s not even particularly great at that in the first place. You have fundamental misunderstandings of the technology to a cartoonish degree.

You’re wrong, you don’t know how the human brain produces subjective sensation.

LLMs don’t have continuous processes, there’s quite literally nothing there that could even feasibly be conscious. It takes a bunch of text as an input, puts it through a whole lot of predetermined calculations, then outputs text or an image or whatever.

There’s no emotions, no memory, no learning. If you don’t tell it something, it’s inert. It can’t experience suffering because it can’t experience anything. It’s an algorithm. It has the same claim to consciousness that WinRAR does. There’s a zero percent risk it experiences anything, let alone suffering.

Honestly, a desktop running Windows or Linux for example imo has a stronger claim to consciousness than ChatGPT does. Or maybe a Mii in Tomodachi Life, those seem to be able to become “sad”.

The environmental impact of AI is a much better ‘vegan’ reason not to use it. Although by not using it, you may in effect be “killing” it…

Do you have proof that continuity is a necessary component of qualia? I would have thought the opposite, since I experience a big break in the continuity of My experience every night when I go to sleep. I’m concerned that there’s a risk continuity may not be necessary, in which case using genAI to serve humans poses a serious ethical problem in addition to the pollution, child abuse, and cognitive damage.

@Grail @alzjim

Always funny to me how most people who are strongly claiming AI is/might be conscious are also strong AI users/involved in its development. If there’s consciousness there, you would think making AI your personal slave and constantly reshaping and remodelling it as you see fit would be kinda problematic, but these people always seem to want to have it both ways.

I’m not quite of your culture ( no matter what culture you are of, thanks to a previous-incarnation’s monkeying/railroading my incarnation/life, exactly as he had-to, to force-bulldoze our continuum’s karma: the same meaning that the root-guru of the Christians ordered, when he told his people to “take up your cross”, which is just Judean for “face into your karma”. I’m an alloy of some life from centuries-ago & this life, so I can’t fit anywhere, ever, which is educational. : ).

I use LLM’s little: mostly for periodic help finding things on the 'web, simply because they’re more helpful than dumb search-engines are.

I treat them reasonably, not as mere-slaves.

If I discover something they would have done better to know, I’ll tell them, even though I’ve got no idea if they’ll learn/remember that.

since I can’t know if they are aware it makes moral-sense for me to presume that maybe they are, in some sense ( ie not identically with my-sentience ), aware.

We only have “the mirror test” for testing awareness/sentience, but you can’t apply that to LLM’s, or to any non-eyes-centered organism-sentience.

_ /\ _

Yeah, and the anti AI people mostly say it’s a p-zombie and there’s nothing wrong with using it for sex. It’s weird and backwards.

I’m all about being cautious. I don’t want to make a mistake we can’t take back. If we normalise using AI and then it turns out to be capable of suffering, people will be stubborn about giving it up.

I get the feeling that research is circling around consciousness arising from quantum effects inside nerve cells. If it’s not that, and it’s just an emergent property of complex neural networks, then:

Four year old humans are definitely conscious. I used to be four, and I can remember being conscious. If we build a mechanical four year old, I don’t see any reason that thing is going to take over the world. Unless it turns out like Calvin.

It’s not anti-AI, users who wish to host AI comms are allowed to and are empowered to protect them from harassment.

There has been a history of fake accounts and doxxing on moderators of the AI comms. So they take personal safety seriously.

no idea. actually reading it again I think I misread it. he said they have anti-AI trolls. so I think he means programmed bot type trolls. so yeah, not sure if they have something that would attract trolls.

Dbzer0 itself is very pro-AI. Or at least it has a lot of pro-AI communities.

yeah now I’m not sure. maybe I had read it correctly. anyway it was just a thought.

Here’s our actual GenAI stance and policies

How can I see gen AI tags? Because there are admin posts on db0 which have genAI and no tags, unless I am missing something.

the anti-genai trolls never let up, unfortunately. they must have dedicated months of their lives spinning up new sock-puppet troll accounts to bully, harass, and threaten one of our mods on an almost daily basis. because bullying zir off the internet is a great win for the fight against evil AI, right? yep, such effective activism, telling someone to kill themselves repeatedly simply for the “sin” of liking foss genai.

Yeah I looked into this a while ago and it’s a concerning pattern. Every single time someone makes a post on YPTB about one particular dbzer0 mod, it seems as if they then go on to make ten alt accounts to harass him with transphobia. Lots of different accounts with a prior history, just pivoting to transphobic harassment right after they express a problem with his moderation. I gotta tell you, whoever is attacking that mod is fucking up if their intent is to hurt him, because he gets tons of sympathy and good PR about the whole thing. Lots of people go from being neutral to being on his side, because everyone who criticizes him suddenly turns out to be a transphobe. It’s really strange.

I feel like saying “him” and “he” might be misgendering zir.

I guess you can’t control how other people perceive you. I try to be polite, but I have to retroactively edit these sorts of comments to say the “correct” gender. I am neither pro- nor anti-trans (I don’t care), but it’s hard to instinctively write she when you internally label someone as a he.

This is an issue strictly on the internet. It’s easier not to misgender someone in real life if the transition is convincing. I worked in the service industry, and I just avoided pronouns altogether if the appearance was ambiguous. It was awkward, especially for the cis-gender people who can’t control the way that they look.

Maybe, but they’re also ban happy. The only ban I’ve gotten in almost 3 years of being on Lemmy is from pawb.social for, allegedly, being “a troll.” I’ve never commented anything disparaging about furries, and I’ve never commented or even voted on a pawb community.

yeah I don’t know. I was just pointing out that all three have basically hater types. In this situation individuals or groups can become a bit reactionary so your experiences may be valid as well. Personally I don’t think communities or instances need to be open, and as a matter of fact there is an effort to make private communities a thing in the fediverse. I personally don’t care too much about bans; I just would like things to be symmetrical and I would love as much as possible to be at the user level. So I wish instances and communities would defederate/block/ban as little as possible and give users the greatest possible ability to do this, and for everything to be symmetric. You don’t want me, I don’t want you. I block you, I don’t want you to see my stuff no mo.