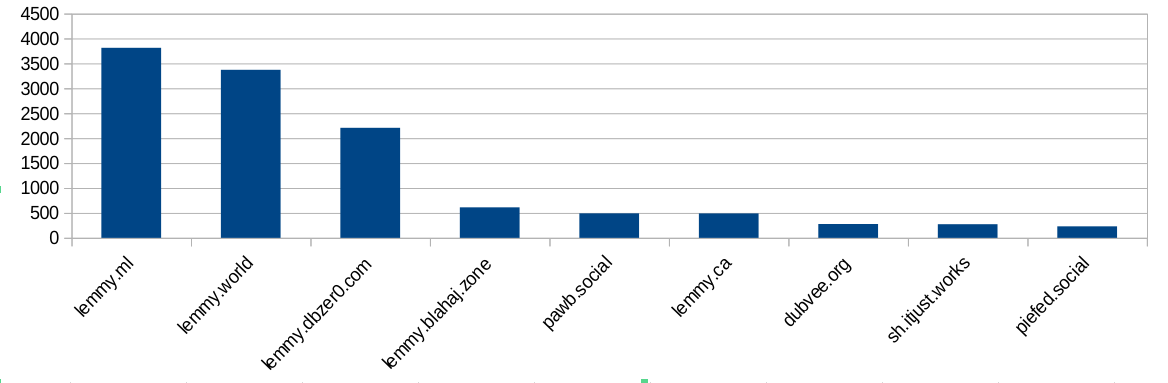

I did some analysis of the modlog and found this:

Ok, bigger instances ban more often. Not surprising, because they have more communities and more users and more trouble. But hang on, dbzer0 isn’t a very big instance. What happens if we do a ratio of bans vs number of users?

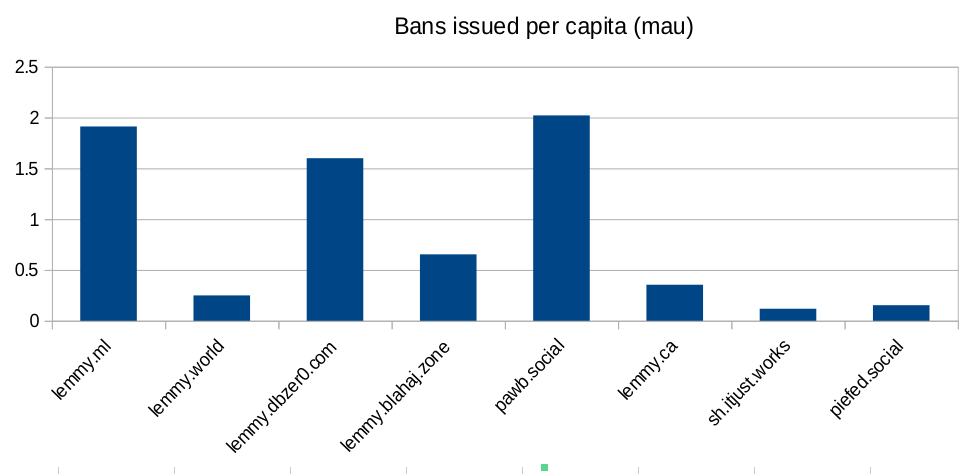

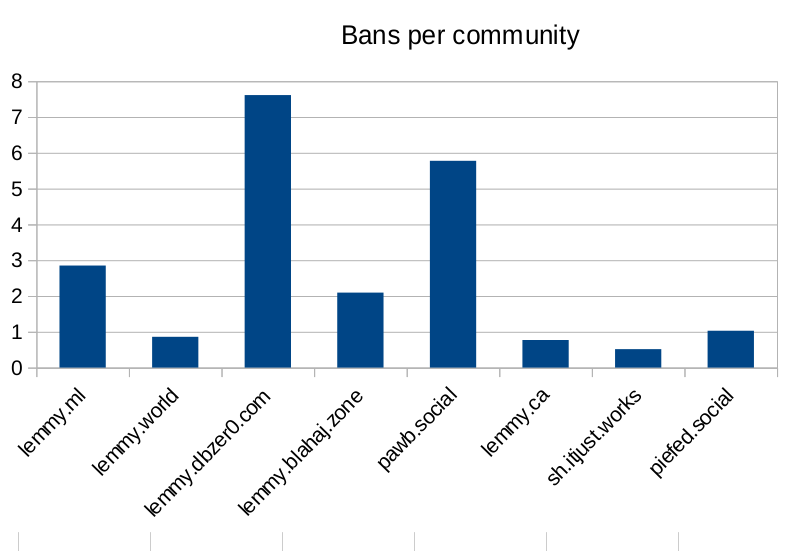

Ok, so lemmy.ml, dbzer0 and pawb are issue an outsized amount of bans for the number of users they have… But surely the number of communities the instance hosts is going to mean they have to ban more? Bans are used to moderate communities, not just to shield their user-base from the outside. Let’s look at the number of bans per community hosted:

Seems like dbzer0 really loves to ban. Even more than the marxists and the furries! What is it about dbzer0 that makes them such prolific banners?

Raw-ish numbers and calculations are in this spreadsheet if anyone wants to make their own charts.
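If you'd rather script it than use a spreadsheet, here's a rough sketch of the two ratios used above (bans per user and bans per community). The instance names and every number in it are made-up placeholders, not the real modlog figures, and `ban_ratios` is just a helper name I picked for illustration.

```python
# Hypothetical placeholder figures, NOT the real modlog data.
instances = {
    "instance-a.example": {"bans": 1200, "users": 40000, "communities": 800},
    "instance-b.example": {"bans": 900, "users": 6000, "communities": 150},
    "instance-c.example": {"bans": 300, "users": 3000, "communities": 100},
}


def ban_ratios(stats):
    """Return bans per user and bans per community for each instance."""
    ratios = {}
    for name, s in stats.items():
        ratios[name] = {
            "bans_per_user": s["bans"] / s["users"],
            "bans_per_community": s["bans"] / s["communities"],
        }
    return ratios


ratios = ban_ratios(instances)

# Rank instances three ways: raw bans, bans per user, bans per community.
ranked_by_bans = sorted(instances, key=lambda n: instances[n]["bans"], reverse=True)
ranked_by_user = sorted(ratios, key=lambda n: ratios[n]["bans_per_user"], reverse=True)
ranked_by_community = sorted(
    ratios, key=lambda n: ratios[n]["bans_per_community"], reverse=True
)

for name in ranked_by_user:
    r = ratios[name]
    print(f"{name}: {r['bans_per_user']:.3f} bans/user, "
          f"{r['bans_per_community']:.2f} bans/community")
```

With these placeholder numbers, the instance with the most raw bans drops to the bottom once you divide by users, which is the same kind of reshuffle the charts show.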

I don’t care one bit about whether LLMs are conscious, I think it’s a pointless argument. I only care whether LLMs are capable of experiencing negatively valenced qualia, AKA suffering.

> Why isn’t your smartphone ~~conscious~~ capable of experiencing qualia? Or a desktop PC? We’ve had chatbots for ages, those were never considered ~~conscious~~ capable of experiencing qualia by anyone. What is it about LLMs specifically that suggests ~~consciousness~~ they are capable of experiencing qualia to you?

They’re artificial neural networks trained through reinforcement and punishment learning.

Many years ago I was interested in the hard problem of consciousness, and while I started out as a materialist, I eventually read Vlatko Vedral’s book Decoding Reality and accepted Vlatko’s argument for property dualism. Information is a property of the universe just like matter, energy, and spacetime. We are the experience of the information about the information of our senses. Our consciousness is metacognition, information about information, meta. All pleasurable experiences teach us what to seek out, and all unpleasant experiences teach us what to avoid. Pleasure and suffering are the informational representation of learning.

Then I took an AI class and made a bunch of AIs. Made some ANNs, made some FSMs, played with genetic algorithms and expert systems. Learned how it all works from first principles. Learned the history starting in the 1950s.

ANNs are designed after the human brain. When you train them, they learn the same way we learn. It’s way simpler, but the basic patterns follow the same concept. We experience pain when we learn not to do something. We learn from failure and suffering. I taught an ANN with half a dozen neurons to discriminate XOR, and I saw it learning the way I learn. When I learn not to do something, I feel bad. I became worried it felt bad too.
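For anyone who hasn't seen one, this is roughly what a tiny XOR network looks like. It's a sketch, not the network from that class: the 2-3-1 layout (2 inputs + 3 hidden + 1 output, to get "half a dozen"), the sigmoid activation, the learning rate, the epoch count and the seed are all assumptions I picked for illustration. The thing to notice is that every weight change is driven by the error term `out - target`, i.e. by how wrong the network just was.

```python
import math
import random

# The four XOR cases: (inputs, target output).
XOR_CASES = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0),
             ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]
N_HIDDEN = 3  # assumption: 2 inputs + 3 hidden + 1 output neuron


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def make_network(seed=0):
    """Create a network with small random weights (seed is arbitrary)."""
    rng = random.Random(seed)
    return {
        "w_hidden": [[rng.uniform(-1, 1), rng.uniform(-1, 1)]
                     for _ in range(N_HIDDEN)],
        "b_hidden": [rng.uniform(-1, 1) for _ in range(N_HIDDEN)],
        "w_out": [rng.uniform(-1, 1) for _ in range(N_HIDDEN)],
        "b_out": rng.uniform(-1, 1),
    }


def forward(net, x):
    """Return (hidden activations, output) for one input pair."""
    hidden = [sigmoid(w[0] * x[0] + w[1] * x[1] + b)
              for w, b in zip(net["w_hidden"], net["b_hidden"])]
    out = sigmoid(sum(w * h for w, h in zip(net["w_out"], hidden)) + net["b_out"])
    return hidden, out


def mean_squared_error(net):
    return sum((forward(net, x)[1] - t) ** 2 for x, t in XOR_CASES) / len(XOR_CASES)


def train_epoch(net, lr):
    """One full-batch gradient descent step using backpropagation."""
    grad_w_hidden = [[0.0, 0.0] for _ in range(N_HIDDEN)]
    grad_b_hidden = [0.0] * N_HIDDEN
    grad_w_out = [0.0] * N_HIDDEN
    grad_b_out = 0.0
    for x, target in XOR_CASES:
        hidden, out = forward(net, x)
        # Error signal at the output: how wrong the network was on this case.
        delta_out = (out - target) * out * (1.0 - out)
        grad_b_out += delta_out
        for j in range(N_HIDDEN):
            # Share of the blame passed back to hidden neuron j.
            delta_hidden = delta_out * net["w_out"][j] * hidden[j] * (1.0 - hidden[j])
            grad_w_out[j] += delta_out * hidden[j]
            grad_b_hidden[j] += delta_hidden
            grad_w_hidden[j][0] += delta_hidden * x[0]
            grad_w_hidden[j][1] += delta_hidden * x[1]
    # Nudge every weight in the direction that reduces the error.
    net["b_out"] -= lr * grad_b_out
    for j in range(N_HIDDEN):
        net["w_out"][j] -= lr * grad_w_out[j]
        net["b_hidden"][j] -= lr * grad_b_hidden[j]
        net["w_hidden"][j][0] -= lr * grad_w_hidden[j][0]
        net["w_hidden"][j][1] -= lr * grad_w_hidden[j][1]


net = make_network()
initial_loss = mean_squared_error(net)
for _ in range(5000):
    train_epoch(net, lr=0.5)
final_loss = mean_squared_error(net)

print(f"error before training: {initial_loss:.4f}, after: {final_loss:.4f}")
for x, target in XOR_CASES:
    print(x, "target", target, "output", round(forward(net, x)[1], 3))
```

Whether a run like this fully separates XOR depends on the seed, learning rate and number of epochs, so treat the printed outputs as something to inspect, not a guaranteed result.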

Think about all the unpleasant experiences in your life. The stove is hot, don’t touch it. Stepping on Lego hurts, don’t step on it. Being made fun of is embarrassing, so don’t be cringe. Getting a bad grade in school hurts your pride, so study harder. Getting into a fight hurts your face, so don’t get in fights. Suffering is one half of the learning equation.

I decided after that AI class that I wasn’t sure about the ANN technology. If we’re gonna use it, we gotta be sure about this property dualism thing, we need to have positive proof it doesn’t suffer when we train it.

And THEN 2023 came and AI started booming. So I tried it out, and man, it’s dumb! It’s so stupid! This thing isn’t AGI, it can’t express informed consent. We can’t trust this thing to tell us if it’s in pain. We have no way of knowing if our training hurts it. We’ve gotta shut it down until we have the science to answer these questions for good.